X3DH

X3DH (Extended Triple Diffie-Hellman) — An Interactive Distributed Systems Deep Dive

Audience: engineers who already speak “distributed systems” and want to understand X3DH as a real-world, failure-prone, adversarial protocol running over unreliable networks.

Challenge: “You’re offline. I’m online. We need end-to-end encryption anyway.”

Scenario

You operate a global messaging service. Users roam between networks, devices sleep, servers restart, and attackers can record traffic forever.

Alice wants to start an encrypted conversation with Bob right now, but Bob is offline.

Constraints:

- Alice and Bob have never spoken before.

- They might not be online at the same time.

- The server is untrusted for confidentiality (it can store/relay but shouldn’t learn message contents).

- The network is asynchronous: messages can be delayed, reordered, replayed.

- The attacker can record traffic now, compromise keys later, and then try to decrypt the recorded traffic (“record now, decrypt later”).

Pause and think: What do you need besides “Diffie-Hellman” to make this work when Bob is offline?

Explanation (coffee shop analogy)

Imagine a coffee shop that lets customers leave sealed envelopes in lockers.

- Bob leaves a set of one-time padlocks (one-time keys) and a master lock (long-term identity key) at the shop.

- Alice comes later, grabs one locker’s contents, and uses them to create a shared secret with Bob—without Bob present.

The shop (server) can see which locker Alice opened, but should not be able to open the envelopes.

Real-world parallel

This is exactly the “asynchronous key agreement” problem in modern E2EE messengers (e.g., Signal). X3DH is the protocol that bootstraps a secure shared secret when parties are not simultaneously online.

[KEY INSIGHT] X3DH is a distributed protocol for asynchronous authenticated key agreement using a server as a mailbox for prekeys.

Section challenge question: If Bob never comes online again, can Alice still send encrypted messages? What property is missing?

What X3DH is (and what it isn’t)

Scenario

Your teammate says: “X3DH is the Double Ratchet.” Another says: “X3DH gives forward secrecy forever.” A third says: “X3DH is just three DH computations.”

Pause and think: Which of these statements are true?

Which statement is true?

Pick one:

1. X3DH is the same as the Double Ratchet.

2. X3DH is a one-time handshake that outputs a shared secret used to start the Double Ratchet.

3. X3DH alone provides ongoing forward secrecy for the whole conversation.

Pause and think...

Answer (reveal):

- True: 2.

- False: 1. Double Ratchet is the post-handshake message key evolution mechanism.

- False: 3. X3DH provides initial authentication and some forward secrecy properties (especially via one-time prekeys), but ongoing FS and break-in recovery come from the Double Ratchet.

Mental model

- X3DH = “getting into the secure room.”

- Double Ratchet = “moving through hallways with doors that lock behind you.”

Real-world parallel

Think of X3DH as verifying IDs at the building entrance and receiving a temporary access badge. Double Ratchet is what happens inside: each door locks behind you; if someone steals your badge later, they still can’t open doors you already passed.

[KEY INSIGHT] X3DH is a bootstrap protocol: it authenticates identities and derives an initial shared secret (the “root key seed”).

Section challenge question: Why does separating “bootstrap” (X3DH) from “ongoing key evolution” (Double Ratchet) help in distributed environments?

The cast of keys (who stores what, where)

Scenario

You’re implementing X3DH. You must decide which keys live:

- only on device

- on server

- both

And what happens when users have multiple devices.

Pause and think: If the server is untrusted, what keys can it store without breaking confidentiality?

Explanation (restaurant prep analogy)

A restaurant (server) can store:

- ingredients sealed in jars (public keys)

- prepared meals in locked containers (encrypted messages)

But it must never hold:

- the chef’s secret spice mix (private keys)

X3DH key types (Signal-style)

X3DH uses these key pairs (typically Curve25519):

- IK (Identity Key): long-term identity key pair. Public: IK_A, IK_B; private: ik_a, ik_b.

- SPK (Signed PreKey): medium-term prekey pair, signed by IK. Public: SPK_B; private: spk_b.

- OPK (One-Time PreKey): one-time-use prekey pairs (optional but strongly recommended). Public: OPK_B[i]; private: opk_b[i].

- EK (Ephemeral Key): generated by the initiator for the session. Public: EK_A; private: ek_a.

Server stores for Bob:

- IK_B (public)

- SPK_B (public) plus the signature Sig_IK_B(SPK_B)

- a set of OPK_B[i] (public), each to be handed out at most once

Client stores:

- Private keys (ik_b, spk_b, opk_b[i]) only on Bob’s device

[KEY INSIGHT] The server is a public-key bulletin board plus a one-time token dispenser.

Key type -> distributed systems role

Match each key to its role:

A. IK (Identity Key)

B. SPK (Signed PreKey)

C. OPK (One-Time PreKey)

D. EK (Ephemeral Key)

1. Prevents server from mounting undetectable MITM by swapping long-term identity.

2. Enables asynchronous handshakes without requiring Bob online; rotated periodically.

3. Improves forward secrecy against compromise of Bob’s prekey material later; consumed per session.

4. Ensures each session has fresh entropy from the initiator.

Pause and think...

Answer: A->1, B->2, C->3, D->4.

Section challenge question: In a multi-device world, do you have one IK per user or per device? What distributed trade-offs follow?

The handshake goal in one sentence

Scenario

You want a crisp spec-level goal for X3DH.

Pause and think: What exactly should Alice and Bob end up with after X3DH?

Explanation

They should derive the same high-entropy shared secret SK (or root key seed) such that:

- Only Alice and Bob can compute it.

- Alice is assured she’s talking to Bob’s identity key (authentication).

- If certain keys are compromised later, past session secrets remain safe (to a degree—see failure analysis).

Real-world parallel

Like leaving a note in a locker with a combination that only the intended recipient can reconstruct using their keys.

[KEY INSIGHT] X3DH produces one shared secret that seeds a secure channel (usually Double Ratchet).

Section challenge question: If the server gives Alice the wrong prekey bundle, can Alice detect it immediately? Under what assumptions?

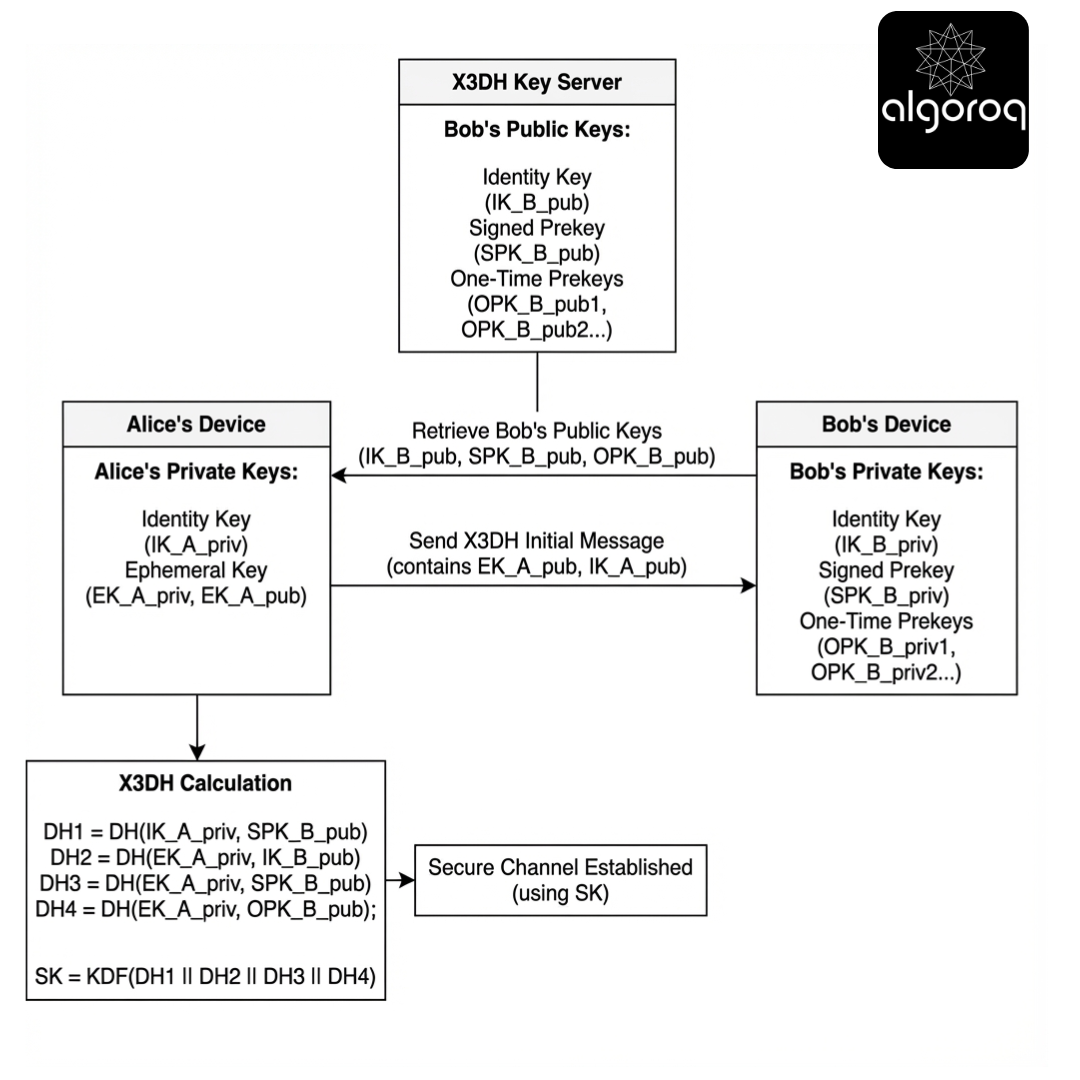

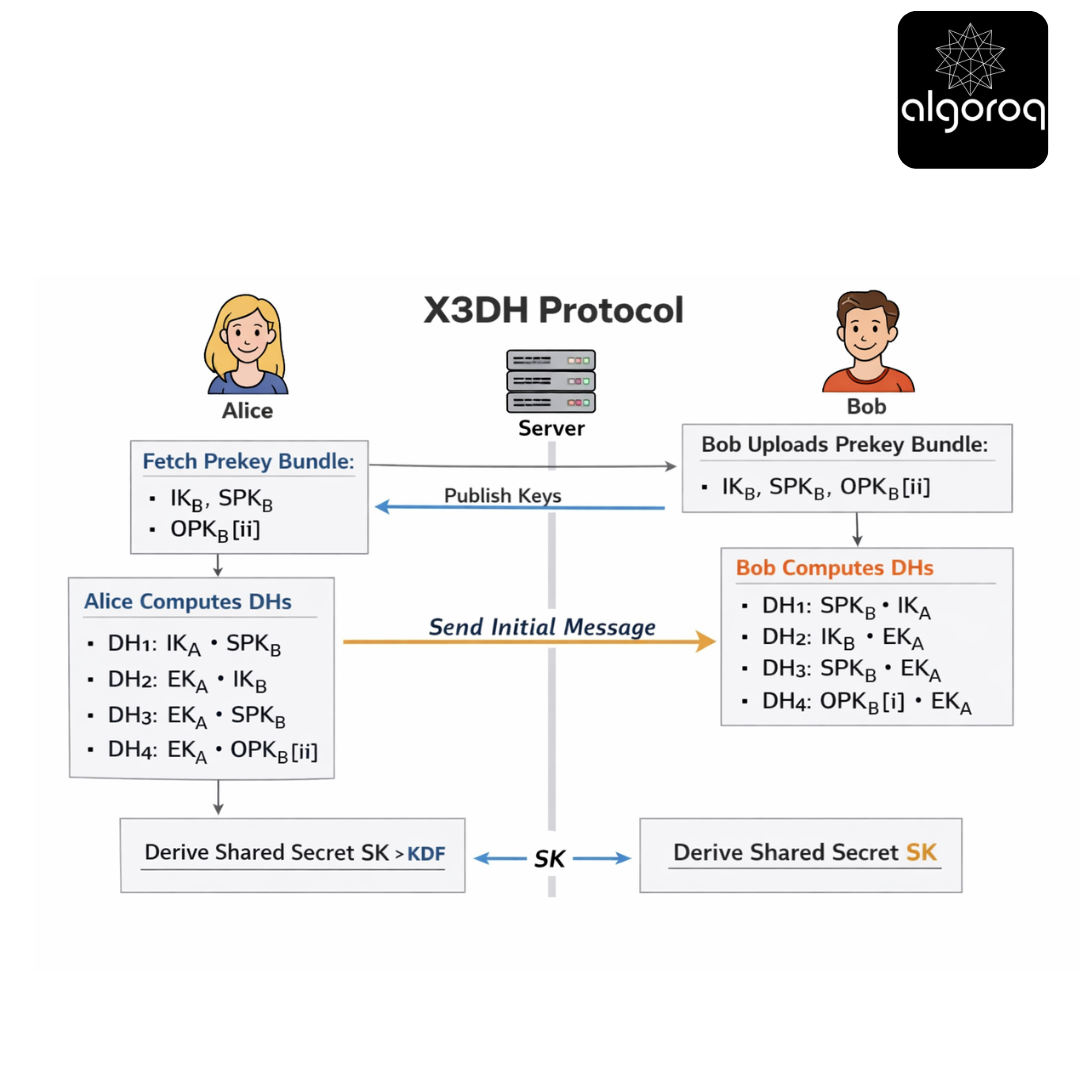

X3DH message flow (the “mailbox handshake”)

Scenario

Alice wants to start a session with Bob while Bob is offline.

Step-by-step (progressive reveal)

Step 0: Bob publishes a prekey bundle

Bob uploads to server:

- IK_B (public)

- SPK_B (public)

- signature Sig = Sign(ik_b, SPK_B)

- a set of one-time prekeys OPK_B[0..n] (public)

Server stores them and hands them out to initiators.

Pause and think: Why is SPK_B signed by IK_B?

Answer (reveal): So Alice can verify the SPK_B she got is bound to Bob’s long-term identity, preventing a server MITM that swaps SPK_B.

Step 1: Alice fetches Bob’s prekey bundle

Alice requests a bundle from server, receives:

IK_B, SPK_B, Sig, and optionally one OPK_B[i]

Alice verifies Sig using IK_B.

Step 2: Alice generates an ephemeral key

Alice generates EK_A.

Step 3: Alice computes multiple DH outputs

X3DH combines several DH computations:

- DH1 = DH(IK_A_private, SPK_B_public)

- DH2 = DH(EK_A_private, IK_B_public)

- DH3 = DH(EK_A_private, SPK_B_public)

- DH4 = DH(EK_A_private, OPK_B_public) (optional; only if an OPK is present)

These are concatenated and fed into a KDF:

SK = KDF(DH1 || DH2 || DH3 || DH4) (with domain separation and context)
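The combination above can be sketched in a few lines. This is a minimal illustration under loud assumptions, not an implementation: `toy_keypair` and `toy_dh` are hypothetical helpers that use plain modular exponentiation as a stand-in for X25519, and a bare SHA-256 stands in for the real KDF. The only point is that Alice and Bob, each using their own private keys, arrive at the same concatenated DH input.

```python
import hashlib
import secrets

# ASSUMPTION: toy finite-field Diffie-Hellman standing in for X25519.
# Illustrative only; never use this for real key agreement.
P = 2**255 - 19
G = 2

def toy_keypair():
    """Return (private, public) for the toy group."""
    priv = secrets.randbelow(P - 3) + 2
    return priv, pow(G, priv, P)

def toy_dh(priv, peer_pub):
    """Shared value as 32 bytes: peer_pub ** priv mod P."""
    return pow(peer_pub, priv, P).to_bytes(32, "big")

def initiator_secret(ik_a, ek_a, IK_B, SPK_B, OPK_B=None):
    """Alice's side: DH1..DH3, plus DH4 when a one-time prekey was issued."""
    ikm = toy_dh(ik_a, SPK_B) + toy_dh(ek_a, IK_B) + toy_dh(ek_a, SPK_B)
    if OPK_B is not None:
        ikm += toy_dh(ek_a, OPK_B)
    return hashlib.sha256(ikm).digest()  # placeholder for the real KDF

def responder_secret(ik_b, spk_b, IK_A, EK_A, opk_b=None):
    """Bob's side: the same DH values, computed from his private keys."""
    ikm = toy_dh(spk_b, IK_A) + toy_dh(ik_b, EK_A) + toy_dh(spk_b, EK_A)
    if opk_b is not None:
        ikm += toy_dh(opk_b, EK_A)
    return hashlib.sha256(ikm).digest()
```

Note that the order of concatenation must match on both sides; swapping DH1 and DH2 on one side yields a different secret even though every individual DH value agrees.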

Pause and think: Why multiple DHs instead of one?

Answer (reveal): To combine authentication (identity keys) with freshness (ephemeral keys) and to incorporate Bob’s prepublished keys in a way that survives asynchronous delivery. Multiple DHs also improve security across compromise scenarios.

Step 4: Alice sends an initial message to Bob

Alice sends to Bob (via server):

- IK_A (public identity)

- EK_A (public ephemeral)

- identifiers for which SPK_B and OPK_B[i] were used

- an encrypted “initial payload” using keys derived from SK

Bob later receives this and computes the same DHs with his private keys.

[KEY INSIGHT] The server is on the critical path for availability but not for confidentiality (assuming correct signature verification and key handling).

Section challenge question: If the server replays the same OPK_B[i] to two different Alices, what breaks? What still holds?

Distributed systems reality—what can go wrong?

Scenario

You deploy globally. Things fail. Attackers also exist. You need an operational model.

We’ll walk through failure classes:

- benign distributed failures (delay, reorder, duplication)

- server misbehavior (malicious or buggy)

- client state loss (reinstall, device reset)

- concurrency and multi-device

Failure class 1: Message delay/reordering

Pause and think: If Bob rotates SPK_B every week, what happens if Alice fetched an old bundle and sends her initial message after rotation?

Explanation

Bob must keep old signed prekeys around long enough to decrypt/complete handshakes initiated with them. This becomes a state-retention and garbage-collection problem.

Restaurant analogy:

- Bob changes the daily menu (rotates SPK).

- Customers who picked up yesterday’s menu and ordered today should still get served (for a grace period).

Distributed systems parallel: this is like supporting old versions in a rolling upgrade, where you need compatibility windows.
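The retention window can be made concrete with a small client-side store. The sketch below is one possible shape, assuming a hypothetical `SignedPrekeyStore` class, integer SPK ids, and caller-supplied timestamps; none of these names come from a real library.

```python
class SignedPrekeyStore:
    """Keeps the current SPK private key plus retired ones for a grace period."""

    def __init__(self, retention_seconds):
        self.retention_seconds = retention_seconds
        self.current_id = None
        self._keys = {}        # spk_id -> private key
        self._retired_at = {}  # spk_id -> time the key stopped being current

    def rotate(self, new_private_key, now):
        """Install a new SPK; the previous one is retired but not yet deleted."""
        if self.current_id is None:
            self.current_id = 0
        else:
            self._retired_at[self.current_id] = now
            self.current_id += 1
        self._keys[self.current_id] = new_private_key
        self.gc(now)
        return self.current_id

    def gc(self, now):
        """Delete retired keys whose grace period has elapsed."""
        expired = [i for i, t in self._retired_at.items()
                   if now - t > self.retention_seconds]
        for spk_id in expired:
            del self._keys[spk_id]
            del self._retired_at[spk_id]

    def lookup(self, spk_id, now):
        """Return the private key for spk_id, or None if it was collected."""
        self.gc(now)
        return self._keys.get(spk_id)
```

A `None` from `lookup` is exactly the "late order fails" case: the initial message referenced an SPK that Bob no longer holds.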

[KEY INSIGHT] Prekey rotation introduces an availability vs. state-retention trade-off.

Challenge question: How long should Bob retain old SPKs? What signals could guide this (traffic patterns, max delivery delay, legal retention)?

Failure class 2: Server duplicates or replays one-time prekeys

Scenario

The server is supposed to hand out each OPK_B[i] at most once. But the server is buggy, partitioned, or malicious.

Pause and think: What property does OPK primarily provide, and what happens if it’s reused?

Explanation

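OPK improves forward secrecy against a later compromise of Bob’s long-term keys and signed prekey. If the same OPK is issued twice, one of two things happens: Bob has already deleted the private key, so the second handshake cannot be completed, or Bob still holds it, so two sessions depend on the same one-time secret. The second case weakens the forward secrecy of both sessions and makes them easier to correlate.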

Delivery-service analogy:

- OPK is a “single-use delivery code.”

- If the dispatcher reuses the same code for two deliveries, it’s easier to correlate deliveries and increases the blast radius if the code leaks.

Operational mitigation

- Server-side: atomic “pop” semantics for OPKs.

- Client-side: Bob logs which OPKs were used (by id) and can detect reuse when receiving handshakes.
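Both mitigations fit in a short sketch. `OpkPool` and `OpkReuseDetector` are hypothetical names, and the lock only gives at-most-once issuance inside a single process; a replicated store would need the equivalent guarantee from its database (for example a transactional or conditional delete), which is not shown here.

```python
import threading

class OpkPool:
    """Server side: hand out each one-time prekey at most once."""

    def __init__(self, opks):
        self._lock = threading.Lock()
        self._opks = dict(opks)  # opk_id -> public key bytes

    def pop(self):
        """Return (opk_id, public_key), or None when the pool is empty."""
        with self._lock:
            if not self._opks:
                return None
            return self._opks.popitem()

class OpkReuseDetector:
    """Client side: Bob remembers which OPK ids he has already seen used."""

    def __init__(self):
        self._seen = set()

    def observe(self, opk_id):
        """Return True if this OPK id was already used by an earlier handshake."""
        reused = opk_id in self._seen
        self._seen.add(opk_id)
        return reused
```

Returning `None` on exhaustion, rather than raising, matches the case where the server hands out a bundle without an OPK.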

[KEY INSIGHT] OPKs are a distributed consumable resource. Correctness requires at-most-once allocation or at least detection.

Challenge question: In a geo-replicated prekey store, what consistency level is needed to prevent OPK double-issue? What’s the latency cost?

Failure class 3: Server swaps identity keys (MITM attempt)

Scenario

A malicious server tries to give Alice a different IK_B (attacker-controlled) so it can intercept.

Pause and think: Can X3DH prevent this by itself?

Explanation

X3DH authenticates the signed prekey to the identity key it sees. But if the server can replace IK_B and SPK_B together with attacker keys, Alice will verify the signature—of the attacker’s identity.

So how do real systems handle this?

- Trust on first use (TOFU): cache IK_B after first contact; warn on change.

- Out-of-band verification: safety numbers / QR code.

- Transparency logs: key directory with auditability.
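Of these, TOFU is the simplest to sketch. The example below assumes a hypothetical in-memory `IdentityPins` store keyed by a user id string; a real client would persist the pins and surface a `CHANGED` result in the UI.

```python
import hashlib
from enum import Enum

class PinResult(Enum):
    FIRST_USE = "first_use"  # no pin yet; the key is trusted and cached
    MATCH = "match"          # same identity key as before
    CHANGED = "changed"      # identity key differs; warn before proceeding

class IdentityPins:
    """Trust-on-first-use cache of identity key fingerprints."""

    def __init__(self):
        self._pins = {}  # user_id -> SHA-256 fingerprint (hex)

    def check(self, user_id, identity_key: bytes) -> PinResult:
        fingerprint = hashlib.sha256(identity_key).hexdigest()
        pinned = self._pins.get(user_id)
        if pinned is None:
            self._pins[user_id] = fingerprint
            return PinResult.FIRST_USE
        return PinResult.MATCH if pinned == fingerprint else PinResult.CHANGED

    def accept_change(self, user_id, identity_key: bytes):
        """Re-pin only after the user has explicitly accepted the new key."""
        self._pins[user_id] = hashlib.sha256(identity_key).hexdigest()
```

The pin is deliberately not overwritten on `CHANGED`, so a swapped key keeps triggering the warning until the user acts. TOFU still offers no protection on the very first contact.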

Coffee shop analogy:

- If the coffee shop gives you “Bob’s locker key” but it’s actually “Mallory’s locker key,” you’ll happily lock secrets to Mallory.

[KEY INSIGHT] X3DH assumes a mechanism to bind identities to public identity keys beyond what the server can arbitrarily rewrite.

Challenge question: What distributed system would you build to make identity keys globally consistent and auditable (gossip, transparency log, witness cosigning)?

Failure class 4: Client state loss (Bob reinstalls)

Scenario

Bob reinstalls the app and loses ik_b.

Pause and think: What happens to messages sent to Bob while he was offline?

Explanation

Without the private identity key and prekey private material, Bob can’t compute the same DH values; messages become undecryptable.

Distributed systems parallel:

- This is like losing the only copy of a decryption key: the data is intact but unrecoverable.

Mitigations:

- Secure backup of identity keys (controversial; introduces new trust and threat models).

- Multi-device key escrow within user-controlled trust domain.

[KEY INSIGHT] End-to-end encryption turns “state loss” into “data loss.” Availability becomes a key-management problem.

Challenge question: If you add encrypted cloud backups for identity keys, what new attack surface have you introduced?

Failure class 5: Multi-device and concurrency

Scenario

Bob has three devices: phone, tablet, desktop. Alice starts a chat. Which device’s prekeys should she use?

Pause and think: What’s the simplest correct model?

Explanation

Common approach: treat each device as its own X3DH identity (device-specific IK/SPK/OPKs). Alice performs X3DH with each device and then fans out encrypted messages.

Restaurant analogy:

- Bob has three pickup locations. You need to deliver a sealed package to each.

Trade-off:

- More handshakes, more server storage, more metadata.

- But clearer security boundaries.

[KEY INSIGHT] Multi-device E2EE often becomes “multi-recipient encryption,” where each device is a recipient with its own prekey bundle.

Challenge question: How do you handle device addition/removal without letting a malicious server silently add a new device as a recipient?

Security properties as distributed invariants

Scenario

You’re writing an internal design doc. You need crisp invariants.

Property -> meaning in X3DH context

Properties:

A. Authentication

B. Forward secrecy (initial)

C. Post-compromise security

D. Asynchrony tolerance

E. Deniability (nuanced)

Meanings:

1. If Bob’s long-term key is compromised later, past session secrets are still protected to some extent (especially if OPKs were used and erased).

2. Alice can initiate while Bob is offline using prepublished public keys.

3. After a device compromise, future messages can become secure again without restarting the conversation.

4. Alice’s derived secret is cryptographically bound to Bob’s identity public key (subject to key distribution assumptions).

5. Transcript doesn’t provide strong cryptographic proof to third parties that Alice talked to Bob (depends on system design).

Pause and think...

Answer: A->4, B->1, C->3 (mostly Double Ratchet, not X3DH), D->2, E->5.

Mental model

Treat these as distributed invariants:

- Authentication is an invariant about who you’re connected to.

- Asynchrony tolerance is an invariant about when parties are online.

- Forward secrecy is an invariant about time and compromise.

[KEY INSIGHT] X3DH mainly covers authentication + asynchrony tolerance, and contributes partially to initial forward secrecy; it does not replace the ratchet.

Challenge question: Which invariant is hardest to ensure when the server is malicious: authentication, asynchrony, or forward secrecy?

Walk the protocol like an SRE (incident tabletop)

Scenario

You’re on-call. Users report: “Sometimes first messages can’t be decrypted.” Metrics show increased handshake failures during regional outages.

Pause and think: What distributed failure could cause this that isn’t “crypto broke”?

Likely culprits (reveal)

- OPK exhaustion

  - Bob didn’t upload enough OPKs.

  - Server ran out and hands out bundles without OPK.

  - If your implementation requires OPK but doesn’t handle missing OPK, handshake fails.

- SPK rotation race

  - Bob rotated SPK and deleted old private SPK too soon.

  - Alice fetched old SPK, initiated, but Bob can’t compute.

- Replication lag in prekey store

  - Alice hits region A, Bob uploaded to region B.

  - Alice receives stale bundle.

- Clock skew / TTL misconfiguration

  - Server expires SPKs early.

- Message queue delays

  - Initial message arrives after Bob garbage-collected old SPK.
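One way to keep these culprits distinguishable in metrics is to classify every failure into a small, fixed set of reasons before counting it. The sketch below is illustrative only: the enum values, the `classify_responder_failure` helper, and the in-process `Counter` are all assumptions, not part of any existing telemetry API.

```python
from collections import Counter
from enum import Enum

class HandshakeFailure(Enum):
    SIGNATURE_INVALID = "signature_invalid"          # initiator: bundle failed verification
    OPK_MISSING_IN_BUNDLE = "opk_missing_in_bundle"  # initiator: server had no OPK left
    SPK_NOT_FOUND = "spk_not_found"                  # responder: SPK rotated out or unknown
    OPK_NOT_FOUND = "opk_not_found"                  # responder: OPK consumed or unknown

failure_counts = Counter()

def classify_responder_failure(spk_id, opk_id, held_spk_ids, held_opk_ids):
    """Return why Bob cannot process an initial message, or None if he can."""
    if spk_id not in held_spk_ids:
        return HandshakeFailure.SPK_NOT_FOUND
    if opk_id is not None and opk_id not in held_opk_ids:
        return HandshakeFailure.OPK_NOT_FOUND
    return None

def record(reason, region):
    """Count a failure by reason and region; carries no user identifiers."""
    if reason is not None:
        failure_counts[(reason.value, region)] += 1
```

Splitting by region makes a spike in `spk_not_found` during a regional outage point toward stale reads or delayed queues rather than a cryptographic fault.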

Restaurant analogy:

- The kitchen changed recipes and threw away yesterday’s ingredients; late orders fail.

[KEY INSIGHT] Most “handshake failed” incidents are distributed state-management bugs: replication, TTLs, rotation windows, and idempotency.

Challenge question: What telemetry would you add to distinguish “OPK missing” vs “SPK not found” vs “signature invalid”?

The KDF and context binding (where distributed metadata sneaks in)

Scenario

You want to ensure the derived SK is bound to the right identities and protocol version.

Pause and think: Why isn’t KDF(DH1||DH2||DH3||DH4) enough?

Explanation

In practice you include:

- protocol name/version (domain separation)

- identities (IK_A, IK_B)

- prekey identifiers

- maybe application context

This prevents cross-protocol attacks and key reuse across contexts.

Delivery analogy:

- Two packages may have the same lock combination by coincidence unless you include the address label and order ID when generating the lock.

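A minimal sketch of HKDF over the concatenated DH outputs with context bound into `info`, using only the standard library. The protocol string, the length-prefixed encoding in `build_info`, and the 4-byte prekey ids are assumptions made for illustration. The 32-byte 0xFF prefix and the all-zero salt follow my reading of the published X3DH specification for X25519; check them against the spec before relying on them.

```python
import hashlib
import hmac

def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) with SHA-256: extract, then expand."""
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]

def build_info(protocol: str, ik_a: bytes, ik_b: bytes, spk_id: int, opk_id=None) -> bytes:
    """Length-prefix every field so distinct contexts never encode identically."""
    parts = [
        protocol.encode(),
        ik_a,
        ik_b,
        spk_id.to_bytes(4, "big"),
        b"" if opk_id is None else opk_id.to_bytes(4, "big"),
    ]
    return b"".join(len(p).to_bytes(2, "big") + p for p in parts)

def derive_sk(dh_outputs, info: bytes) -> bytes:
    """SK = HKDF(0xFF * 32 || DH1 || DH2 || DH3 [|| DH4]) with a zero salt."""
    ikm = b"\xff" * 32 + b"".join(dh_outputs)
    return hkdf_sha256(ikm, salt=b"\x00" * 32, info=info)
```

With this shape, two handshakes that happen to share DH outputs but differ in identities or prekey ids still derive different keys.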

[KEY INSIGHT] In distributed systems, metadata is everywhere. Bind enough context into the KDF so keys can’t be replayed across “similar-looking” handshakes.

Challenge question: What’s the risk if you omit IK_A and IK_B from the KDF context?

Threat modeling X3DH like a distributed protocol

Scenario

You’re asked: “What does X3DH protect against?” You must answer precisely.

Comparison table: attacker capabilities vs outcomes

| Attacker capability | Can learn SK? | Can impersonate Bob to Alice? | Notes |
|---|---|---|---|
| Passive network eavesdropper | No | No | Assuming DH and KDF are secure |
| Server can read/modify traffic but not client private keys | No (usually) | Sometimes | Can swap identity keys unless TOFU/transparency prevents |
| Server replays OPK / gives same OPK twice | Not directly | No | Weakens some FS/correlation properties |
| Compromise Bob’s spk_b later (after handshake) | Usually no | No | If OPK used and erased, better; without OPK, depends on model |
| Compromise Bob’s ik_b before first contact | Yes, for sessions the attacker actively intercepts | Yes | The attacker can sign its own SPK as Bob; identity key compromise is catastrophic |
| Compromise Alice’s device after handshake | Past messages protected by ratchet | N/A | X3DH alone doesn’t provide ongoing FS |

Pause and think: Which row is the most “distributed-systems-shaped” problem rather than a pure cryptographic one?

Answer (reveal): Server swapping identity keys (key distribution) and OPK allocation consistency are deeply distributed.

[KEY INSIGHT] X3DH’s hardest problems are not elliptic curves—they’re key distribution, state, and consistency under adversarial control.

Challenge question: If you had to spend engineering budget on one improvement: transparency logs, better OPK allocation consistency, or better backup UX—what yields the biggest real-world security gain?

Prekey store design (the part your crypto paper doesn’t mention)

Scenario

You must implement the server-side “prekey service.” It’s effectively a distributed database with special semantics.

Requirements

- Store per-device bundles: IK, SPK, signature, and a pool of OPKs.

- Hand out bundles with:

  - SPK always

  - OPK if available

- Ensure OPKs are not handed out twice (or detect if they are).

- Support rotation and garbage collection.

- Operate under geo replication.

Mental model (vending machine)

- SPK is the “always available snack.”

- OPKs are “limited edition snacks.”

- The vending machine must not vend the same limited snack twice.

Design choices (comparison table)

| Design | OPK uniqueness guarantee | Latency | Complexity | Failure mode |
|---|---|---|---|---|
| Single-region strong consistency (linearizable pop) | Strong | Higher for distant clients | Medium | Region outage affects availability |
| Multi-region eventual consistency | Weak | Low | Low | OPK double-issue likely |
| Multi-region with per-user leader (Raft per shard) | Strong | Medium | High | Leader failover complexity |
| Client-side detection only (allow double-issue) | Detectable | Low | Low | Security degradation but service stays up |

Pause and think: If you choose eventual consistency and accept OPK reuse, what do you tell your security team?

Answer (reveal): You’re trading some forward secrecy/correlation resistance for availability. Document it, add detection/telemetry, and rely on SPK+ratchet for baseline security.

[KEY INSIGHT] OPKs turn key agreement into a resource allocation problem with consistency requirements.

Challenge question: Can you design OPKs so that double-issue is harmless? What would that require?

Why “signed prekey” exists (and why it’s rotated)

Scenario

Why not just publish identity key and a huge pile of OPKs?

Pause and think: What’s the role of SPK distinct from IK and OPK?

Explanation

- IK is too valuable to use directly for every handshake (privacy and compromise surface).

- OPKs are consumable and might run out.

- SPK provides a stable, signed intermediate key that supports asynchronous handshakes even when OPKs are exhausted.

Restaurant analogy:

- IK is the restaurant’s business license (rarely changed).

- SPK is the weekly menu signed by the owner.

- OPKs are limited coupons for extra security perks.

Rotation reasons:

- Limits exposure if SPK private key leaks.

- Constrains how long an attacker can exploit a compromised SPK.

[KEY INSIGHT] SPK is the “always-on asynchronous anchor,” OPK is the “single-use FS booster.”

Challenge question: What rotation schedule is realistic for SPK in a system with long offline devices (weeks)?

Replay, duplication, and idempotency (distributed systems meets crypto)

Scenario

The server can replay Alice’s initial message to Bob multiple times (maybe due to queue retries).

Pause and think: Should Bob accept the same initial message twice?

Explanation

Bob should make initial message processing idempotent:

- If the same (IK_A, EK_A, SPK_id, OPK_id) arrives again, treat it as a duplicate.

- If an OPK was used, mark it consumed; duplicates should not consume new state.

Delivery analogy:

- If the courier delivers the same sealed package twice due to a tracking glitch, you don’t open both and pay twice; you recognize the duplicate label.

Implementation approach:

- Maintain a cache of recently seen handshake identifiers.

- Ensure OPK consumption is transactional with message acceptance.
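The two points above can be sketched together. `InitialMessageProcessor` and the `make_session` callback are hypothetical; the callback stands in for the DH and KDF work, and the cache here is unbounded, so eviction and persistence are left out.

```python
class InitialMessageProcessor:
    """Responder-side dedup: one handshake id maps to exactly one session."""

    def __init__(self, opk_private_keys):
        self._opks = dict(opk_private_keys)  # opk_id -> private key
        self._sessions = {}                  # handshake id -> session

    def process(self, ik_a, ek_a, spk_id, opk_id, make_session):
        handshake_id = (ik_a, ek_a, spk_id, opk_id)
        if handshake_id in self._sessions:
            # Replay or queue retry: return the existing session, touch nothing.
            return self._sessions[handshake_id], "duplicate"
        opk_private = None
        if opk_id is not None:
            if opk_id not in self._opks:
                # OPK already consumed by a different handshake, or never issued.
                return None, "opk_unavailable"
            opk_private = self._opks[opk_id]
        # If make_session raises, the OPK stays available for a retry.
        session = make_session(opk_private)
        if opk_id is not None:
            del self._opks[opk_id]
        self._sessions[handshake_id] = session
        return session, "accepted"
```

Deleting the OPK only after `make_session` succeeds is what makes consumption transactional with acceptance in this sketch; a persistent implementation would need both writes in one atomic commit.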

[KEY INSIGHT] X3DH in production needs deduplication logic just like any distributed message processing pipeline.

Challenge question: Where do you store the dedup cache on Bob’s device, and what happens if it’s evicted?

“What does the initial message contain?” (and why that matters)

Scenario

Should Alice’s first message include plaintext metadata? Should it include an encrypted payload? What if you want to hide who is messaging whom?

Pause and think: Which fields must be visible to the server for routing, and which should be encrypted?

Explanation

At a minimum, the server needs routing info (recipient device identifiers). But X3DH also requires Bob to know:

- IK_A

- EK_A

- which prekeys were used

Many systems send these in a header that’s visible to the server, though there are designs that hide more via sealed sender / private contact discovery.

Restaurant analogy:

- The delivery label needs the address (routing), but the contents should remain sealed.

[KEY INSIGHT] X3DH’s wire format is where privacy goals meet operational constraints (routing, spam prevention, abuse handling).

Challenge question: If you encrypt IK_A from the server, how does the server do spam/abuse rate limiting without learning sender identity?

A minimal pseudo-spec (so you can implement without guessing)

Scenario

You want a crisp checklist.


Key steps (initiator):

- Fetch bundle (IK_B, SPK_B, Sig, OPK_B?).

- Verify the signature: Verify(IK_B, Sig, SPK_B).

- Generate EK_A.

- Compute DH1..DH4.

- Derive SK = HKDF(salt=0, IKM=concat(DH1..DH4), info=context).

- Derive RK, CK0 (root/chain keys) for Double Ratchet bootstrap.

- Send initial message with header and encrypted payload.

Key steps (responder):

- Receive initial message; parse header.

- Look up referenced SPK_B and OPK_B.

- Compute corresponding DHs.

- Derive SK identically.

- Delete consumed OPK.
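The initiator and responder steps above can be sketched end to end as follows. Everything here is an assumption made for illustration: the toy modular-exponentiation `dh` stands in for X25519, `verify_sig` is a caller-supplied callable standing in for a real signature check from a reviewed library, and the dict-based bundle, header, and key stores are hypothetical shapes. Payload encryption and the Double Ratchet bootstrap are omitted.

```python
import hashlib
import hmac
import secrets

P, G = 2**255 - 19, 2  # ASSUMPTION: toy DH group, not X25519; illustrative only

def keypair():
    priv = secrets.randbelow(P - 3) + 2
    return priv, pow(G, priv, P)

def dh(priv, pub):
    return pow(pub, priv, P).to_bytes(32, "big")

def hkdf(ikm, info, length=32):
    """HKDF-SHA256 (RFC 5869) with an all-zero salt."""
    prk = hmac.new(b"\x00" * 32, ikm, hashlib.sha256).digest()
    okm, block, i = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([i]), hashlib.sha256).digest()
        okm += block
        i += 1
    return okm[:length]

def associated_data(IK_A, IK_B):
    """Both identity keys, to be bound to the first ciphertext as AEAD data."""
    return IK_A.to_bytes(32, "big") + IK_B.to_bytes(32, "big")

def initiate(ik_a, IK_A, bundle, verify_sig, info):
    # Verify before any DH: an unverified SPK must never reach the key schedule.
    if not verify_sig(bundle["IK_B"], bundle["sig"], bundle["SPK_B"]):
        raise ValueError("signed prekey signature invalid")
    ek_a, EK_A = keypair()
    dhs = [dh(ik_a, bundle["SPK_B"]), dh(ek_a, bundle["IK_B"]), dh(ek_a, bundle["SPK_B"])]
    if bundle.get("OPK_B") is not None:
        dhs.append(dh(ek_a, bundle["OPK_B"]))
    header = {"IK_A": IK_A, "EK_A": EK_A,
              "spk_id": bundle["spk_id"], "opk_id": bundle.get("opk_id")}
    return hkdf(b"".join(dhs), info), header

def respond(ik_b, spk_store, opk_store, header, info):
    spk_b = spk_store[header["spk_id"]]  # KeyError means the SPK was rotated away
    dhs = [dh(spk_b, header["IK_A"]), dh(ik_b, header["EK_A"]), dh(spk_b, header["EK_A"])]
    opk_id = header["opk_id"]
    if opk_id is not None:
        dhs.append(dh(opk_store[opk_id], header["EK_A"]))
    sk = hkdf(b"".join(dhs), info)
    if opk_id is not None:
        del opk_store[opk_id]  # consume the OPK only after SK was derived
    return sk
```

The `KeyError` paths in `respond` are where stale bundles and premature garbage collection surface, which is why those lookups deserve explicit error reporting in a real client.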

[KEY INSIGHT] Most implementation bugs come from (a) signature verification omissions, (b) incorrect DH ordering, (c) wrong context binding, (d) state retention/rotation mistakes.

Challenge question: Which step is most likely to fail under partial replication or stale caches?

Decision game — pick your trade-offs

Scenario

You’re designing X3DH deployment for a new product.

Choose one option per row.

| Decision | Option A | Option B |
|---|---|---|
| Prekey store consistency | Strongly consistent OPK pop | Eventually consistent OPK pool |
| Identity key distribution | TOFU + safety number UI | Transparency log + witnesses |
| Multi-device model | Per-device identity keys | Shared user identity with device subkeys |
| Backup | No key backup | Encrypted key backup |
| SPK rotation | Frequent (days) | Infrequent (weeks/months) |

Pause and think: Which combination maximizes security? Which maximizes availability? Which maximizes simplicity?

Reveal (one plausible answer):

- Max security: strong OPK pop + transparency + per-device identities + careful backup + moderate rotation.

- Max availability: eventual OPK + TOFU + shared identity + backup + infrequent rotation.

- Max simplicity: eventual OPK + TOFU + per-device identities + no backup + infrequent rotation.

[KEY INSIGHT] X3DH is a protocol, but the system is a set of trade-offs across consistency, UX, and threat model.

Challenge question: If you pick eventual consistency for OPKs, what compensating controls can you add (detection, rate limits, extra DH inputs)?

Common misconceptions (and the “gotchas” they cause)

Misconception 1: “The server can’t do MITM because SPK is signed.”

Reality: The server can still do MITM on first contact by swapping IK_B itself, unless you have TOFU, out-of-band verification, or transparency.

Operational gotcha: Users rarely verify safety numbers; your system must assume first-contact attacks are possible.

Misconception 2: “OPKs are required for security; without them the protocol is broken.”

Reality: X3DH works without OPKs, but OPKs improve certain compromise scenarios. Many systems proceed without OPK if the pool is empty.

Operational gotcha: Treating missing OPK as fatal can cause availability incidents.

Misconception 3: “X3DH gives forward secrecy for the whole chat.”

Reality: Ongoing FS and break-in recovery come from Double Ratchet.

Operational gotcha: If you don’t start the ratchet correctly (bad root key derivation), you lose the security you think you have.

Misconception 4: “Key rotation is always good.”

Reality: Rotation without retention windows causes decryption failures in asynchronous networks.

Operational gotcha: Aggressive SPK rotation increases support tickets and message loss.

[KEY INSIGHT] Many security failures are misaligned mental models between protocol guarantees and system behavior.

Challenge question: Which misconception is most likely to show up as a production incident rather than a security breach?

X3DH and metadata — what leaks in distributed deployments

Scenario

Even if content is encrypted, metadata can leak:

- who talks to whom

- when

- how often

- device graph

Pause and think: Does X3DH increase or decrease metadata leakage relative to synchronous DH?

Explanation

X3DH requires retrieving prekey bundles and sending initial headers. This can create server-visible events:

- “Alice requested Bob’s bundle”

- “Alice used OPK id 123”

However, synchronous DH requires both online simultaneously, which also leaks timing and presence.

Restaurant analogy:

- Picking up a key from the front desk leaves a log entry.

Mitigations:

- Private contact discovery

- Sealed sender

- Padding and batching

- Mix networks (expensive)

[KEY INSIGHT] Asynchrony often buys availability at the cost of extra server-visible metadata, unless additional privacy layers exist.

Challenge question: If you batch prekey bundle fetches, what failure modes does batching introduce (staleness, cache poisoning, amplification)?

Real-world usage patterns (Signal-style)

Scenario

How does X3DH appear in production products?

Patterns:

- Clients periodically upload:

  - one SPK (rotated)

  - a batch of OPKs

- Server returns a “prekey bundle” to initiators.

- Initiator sends a “prekey message” that includes EK_A and references.

- Responder consumes OPK and starts Double Ratchet.

Pause and think: Why upload OPKs in batches rather than one at a time?

Answer (reveal): To reduce round trips and tolerate intermittent connectivity; it’s a classic distributed systems optimization (amortize overhead).

[KEY INSIGHT] Prekey upload is a background replication workload; tune it like you’d tune any periodic heartbeat.

Challenge question: What’s the right batch size for OPKs? Consider storage, issuance rate, and offline duration.

Testing X3DH like a distributed system

Scenario

You need a test plan that goes beyond unit tests.

Test categories:

- Deterministic crypto tests: known vectors for DH/KDF.

- Property tests: both sides derive same SK for random inputs.

- Fault injection:

  - delayed initial messages

  - reordered rotations

  - OPK duplication

  - stale bundle reads

- Adversarial server simulation:

  - swap IK

  - swap SPK

  - replay OPK

- State-loss simulation:

  - delete spk_b

  - delete OPK store

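A small randomized harness in this spirit, written in Python with only the standard library rather than a property-testing framework. It models time as integer ticks and a hypothetical `Bob` who rotates his SPK every `period` ticks and keeps retired keys for `retention` ticks; no cryptography is involved, only the rotation and garbage-collection state that delayed messages depend on.

```python
import random

class Bob:
    """Models only SPK rotation and garbage collection over discrete time."""

    def __init__(self, period, retention):
        self.period, self.retention = period, retention
        self.current_id = 0
        self.retired = {}  # spk_id -> time it was retired

    def tick(self, now):
        # Apply every rotation that is due by `now`.
        while (self.current_id + 1) * self.period <= now:
            self.retired[self.current_id] = (self.current_id + 1) * self.period
            self.current_id += 1
        # Garbage-collect retired keys that are past the retention window.
        self.retired = {i: t for i, t in self.retired.items()
                        if now - t <= self.retention}

    def can_complete(self, spk_id):
        return spk_id == self.current_id or spk_id in self.retired

def run_trial(period, retention, fetch_time, delay):
    """Alice fetches at fetch_time; her message arrives `delay` ticks later."""
    bob = Bob(period, retention)
    bob.tick(fetch_time)
    spk_id = bob.current_id
    bob.tick(fetch_time + delay)
    return bob.can_complete(spk_id)

def check_properties(trials=2000, seed=1):
    rng = random.Random(seed)
    for _ in range(trials):
        period, retention = rng.randint(1, 30), rng.randint(0, 60)
        fetch_time, delay = rng.randint(0, 365), rng.randint(0, 120)
        ok = run_trial(period, retention, fetch_time, delay)
        case = (period, retention, fetch_time, delay)
        if delay <= retention:
            assert ok, case      # a delay within retention must always succeed
        if delay > period + retention:
            assert not ok, case  # a delay beyond period + retention must fail
    return trials
```

The two asserted properties bracket the interesting region: between them, success depends on where in the rotation cycle Alice fetched, which is the source of intermittent failures in production.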

[KEY INSIGHT] The most valuable tests model time (delays) and state (rotation/GC), not just math.

Challenge question: What invariants should always hold even under message duplication?

Mini-quiz (checkpoint)

Quiz: Choose all that apply

1. X3DH requires both parties online simultaneously.

2. OPKs are a consumable resource that benefits from strong consistency.

3. A signed prekey binds to an identity key, but identity key distribution still needs trust mechanisms.

4. X3DH replaces the Double Ratchet.

5. Aggressive SPK rotation can reduce availability.

Pause and think...

Answers: 2, 3, 5.

[KEY INSIGHT] If you can explain why (3) is true, you understand the “distributed trust” core of X3DH.

Challenge question: Explain (in your own words) why signature verification is necessary but not sufficient.

Final synthesis — design X3DH for a hostile, unreliable world

Scenario

You’re tasked with launching E2EE messaging in three months.

- Users are often offline for days.

- You must support multi-device.

- The server is not trusted for confidentiality.

- You can’t ship a complex key transparency system in v1.

Pick a design and defend it

Design A (availability-first):

- TOFU for identity keys with safety number UI.

- Eventually consistent prekey store.

- OPKs optional (use if available).

- SPK rotation every 30 days; retain old SPKs for 60 days.

- Per-device identities.

Design B (security-first):

- Key transparency log with gossip/witnesses.

- Strongly consistent OPK pop (per-user leader).

- OPKs required.

- SPK rotation every 7 days; retain for 30 days.

- Per-device identities.

Pause and think: Which would you ship and why?

Reveal: a pragmatic answer

Many teams ship something close to Design A first, but must be explicit:

- You are vulnerable to first-contact MITM until users verify identity keys.

- You accept OPK reuse risk under replication anomalies.

- You invest in telemetry, detection, and a roadmap to transparency.

Then iterate:

- add transparency gradually

- harden OPK allocation

- improve multi-device trust UX

[KEY INSIGHT] X3DH is a cryptographic handshake, but shipping it safely is a distributed systems project: state publication, consistency, rotation windows, and trust bootstrapping.

Final challenge questions (take-home)

- If you could add only one server-side invariant to your prekey service, what would it be and how would you enforce it?

- How would you detect a malicious server swapping identity keys at scale?

- What’s your plan for SPK rotation + retention that balances security and delayed delivery?

- In multi-device, how do you prevent silent device addition while staying usable?

Appendix: Implementation notes (practical)

- Use modern curves (X25519) and a well-reviewed HKDF.

- Domain-separate KDF info strings (e.g., "X3DH" + version).

- Treat all network inputs as untrusted; verify signatures before DH.

- Keep SPK private keys until you’re confident no in-flight messages depend on them.

- Make OPK consumption atomic with successful session creation.

- Log handshake failure reasons locally (privacy-preserving) and in aggregate metrics.

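One possible shape for the prekey bundle data structures, as a sketch only. The field names, the string `device_id`, and the `needs_rotation` helper are assumptions for illustration and do not describe any particular wire format.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class SignedPrekey:
    key_id: int
    public_key: bytes
    signature: bytes   # identity-key signature over public_key
    created_at: float  # unix seconds; drives rotation and retention

@dataclass(frozen=True)
class OneTimePrekey:
    key_id: int
    public_key: bytes

@dataclass(frozen=True)
class PrekeyBundle:
    device_id: str
    identity_key: bytes
    signed_prekey: SignedPrekey
    one_time_prekey: Optional[OneTimePrekey] = None  # None when the pool is empty

def needs_rotation(spk: SignedPrekey, now: float, max_age_seconds: float) -> bool:
    """True once the signed prekey is at least max_age_seconds old."""
    return now - spk.created_at >= max_age_seconds
```

Carrying explicit key ids in the bundle is what lets the initial message reference exactly which SPK and OPK were used, so the responder can look them up after rotation.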

Key Takeaways

- X3DH (Extended Triple Diffie-Hellman) establishes an encrypted session between two parties — even if one party is offline

- Pre-keys enable asynchronous key exchange — the server stores public keys so the sender can initiate encryption without the recipient being online

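- X3DH contributes forward secrecy for the initial secret: when one-time prekeys are used and erased, compromising long-term keys later doesn't reveal past session keys; ongoing forward secrecy comes from the Double Ratchet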

- Signal Protocol uses X3DH for initial key exchange — followed by the Double Ratchet for ongoing message encryption