Synchronous and Asynchronous Communication Patterns

The fundamental choice in distributed communication: block and wait for a response, or send the request, move on, and handle the result when it arrives.

📞 Synchronous vs Asynchronous Communication: The Phone Call vs Email Story

The Dinner Invitation Scenario

Imagine you're planning a dinner party. You need to invite 10 friends. Let’s see two completely different approaches:

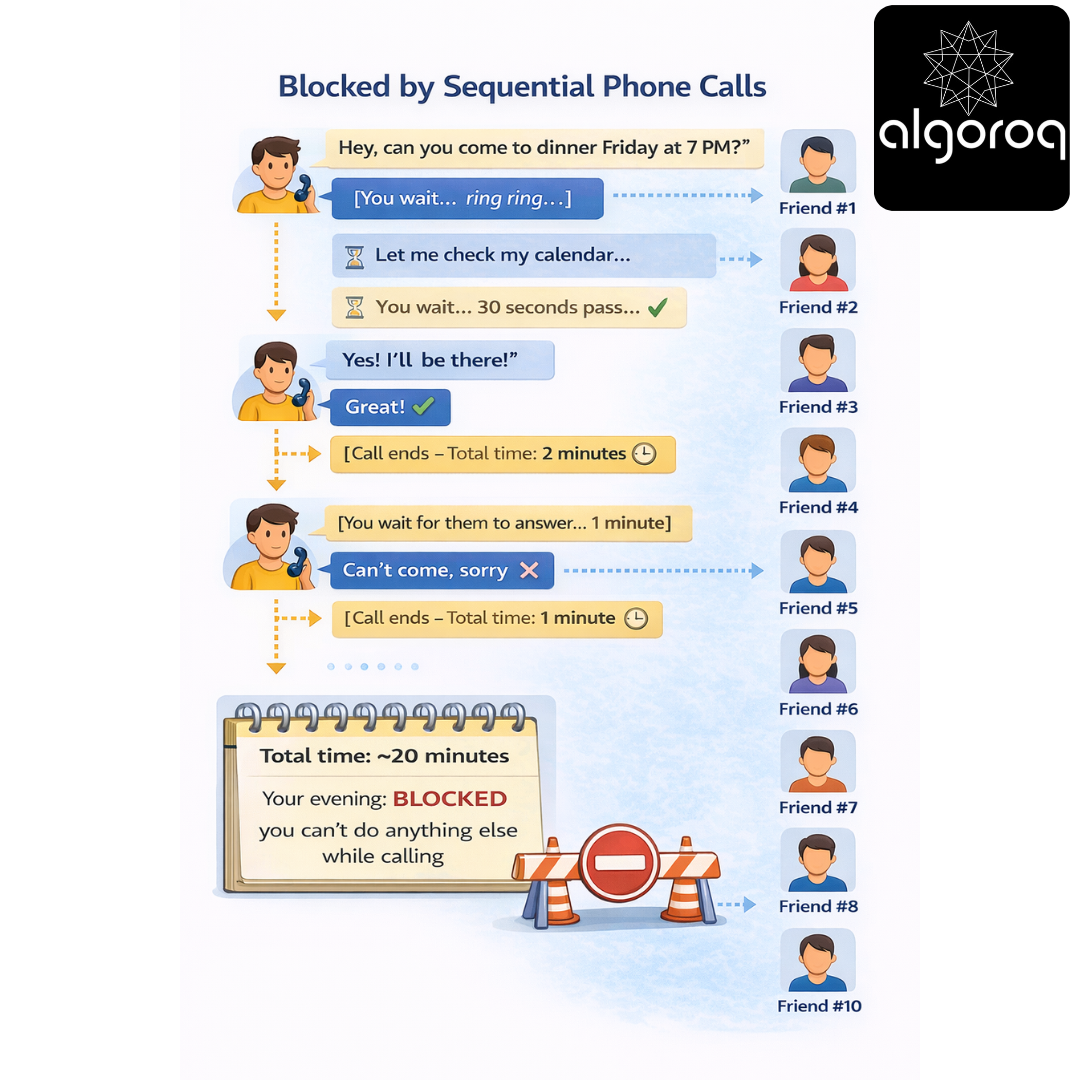

Approach 1: The Phone Call Method (Synchronous)

You pick up the phone and call Friend #1:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

You: "Hey, can you come to dinner Friday at 7 PM?"

[You wait... ring ring...]

Friend 1: "Let me check my calendar..."

[You wait... 30 seconds pass...]

Friend 1: "Yes! I'll be there!"

You: "Great!"

[Call ends - Total time: 2 minutes]

Now you call Friend #2:

[You wait for them to answer... 1 minute]

Friend 2: "Can't come, sorry"

[Call ends - Total time: 1 minute]

Continue for all 10 friends...

Total time: ~20 minutes

Your evening: BLOCKED - you can't do anything else while calling

This is synchronous communication. You make a request and wait for the response before moving on.

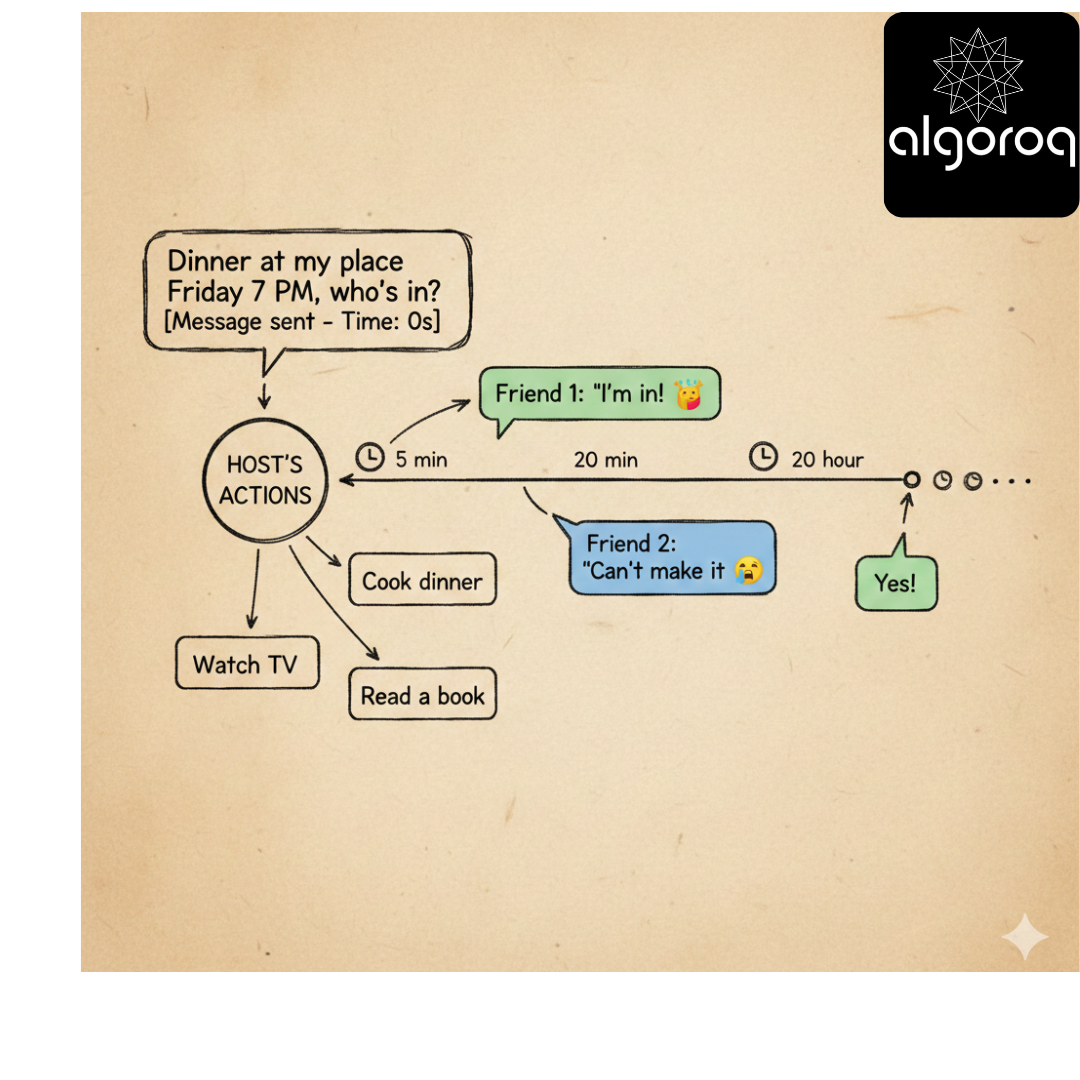

Approach 2: The Group Text Method (Asynchronous)

You send one message to the group chat:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

You: "Dinner at my place Friday 7 PM, who's in?"

[Message sent - Time: 10 seconds]

Now you go do other things:

- Cook dinner

- Watch TV

- Read a book

Responses come in over the next few hours:

Friend 1 (5 min later): "I'm in! 🎉"

Friend 2 (20 min later): "Can't make it 😢"

Friend 3 (1 hour later): "Yes!" ...

Total time: 10 seconds of YOUR time

Your evening: FREE - you're not blocked waiting

This is asynchronous communication. You make requests and continue with your life. Responses arrive whenever they're ready.

Let's Connect This to Real Systems

Now, let’s see how this plays out in software:

Synchronous Example: Traditional Web Request

User's Browser → Your Server

━━━━━━━━━━━━━━━━━━━━━━━━━━━━

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ Total time: 250ms

Server was BLOCKED for 200ms doing nothing but waiting!

Connection to TCP: Remember the TCP article? When your application makes a synchronous HTTP request, it's using TCP underneath. The 3-way handshake completes, the request goes out, and the response comes back, and your code blocks on each of those calls until it returns. TCP itself is not what makes the request synchronous; the blocking calls your code makes on top of it are.
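To make the blocking visible, here is a minimal sketch of a client that sends a request over a loopback TCP connection and waits for the reply. The names `slow_echo_server`, `blocking_request`, and `REPLY_DELAY` are made up for illustration, and the 200ms delay simply mirrors the wait quoted above.

```python
import socket
import threading
import time

REPLY_DELAY = 0.2  # assumed server-side delay, in seconds


def slow_echo_server(listener: socket.socket) -> None:
    """Accept one connection, pause, then send a reply."""
    conn, _ = listener.accept()
    with conn:
        request = conn.recv(1024)
        time.sleep(REPLY_DELAY)  # stands in for slow server-side work
        conn.sendall(b"reply to " + request)


def blocking_request(port: int, payload: bytes) -> tuple[bytes, float]:
    """Send payload and block until the reply arrives."""
    start = time.perf_counter()
    # create_connection returns only after the TCP handshake has completed
    with socket.create_connection(("127.0.0.1", port)) as client:
        client.sendall(payload)
        reply = client.recv(1024)  # the calling thread is parked on this line
    return reply, time.perf_counter() - start


def demo() -> tuple[bytes, float]:
    listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    listener.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
    listener.listen(1)
    port = listener.getsockname()[1]
    server = threading.Thread(target=slow_echo_server, args=(listener,))
    server.start()
    try:
        return blocking_request(port, b"ping")
    finally:
        server.join()
        listener.close()


if __name__ == "__main__":
    reply, waited = demo()
    print(reply, f"(caller was blocked for {waited:.2f}s)")
```

The caller's thread does no useful work between `sendall` and the return of `recv`; that idle stretch is what the rest of this article tries to reclaim.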

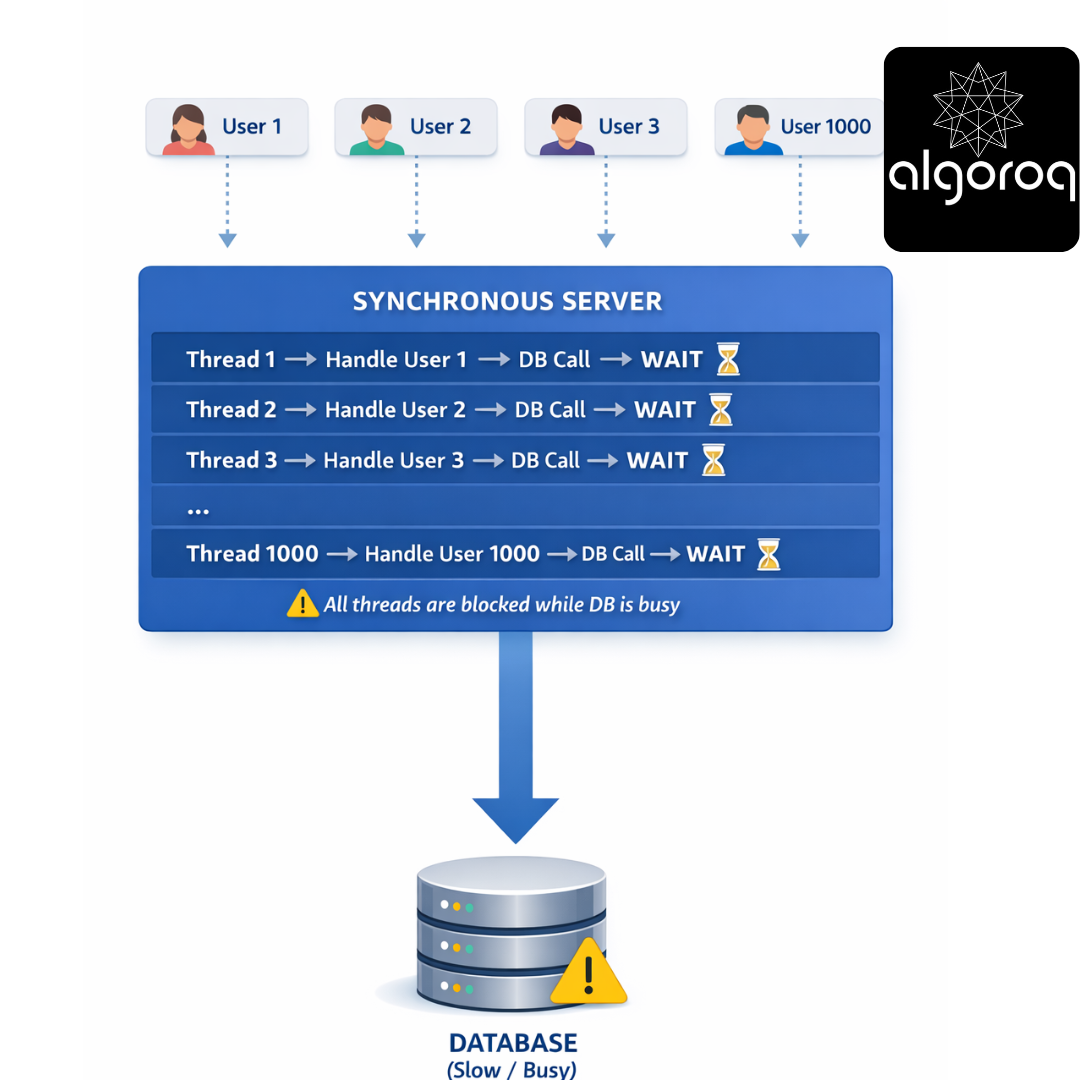

The Problem at Scale:

Scenario: 1000 users click simultaneously

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Synchronous Server:

Result: Server runs out of threads!

User 1001: "Server unavailable" ❌
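The thread-exhaustion scenario can be reproduced in miniature. This is a toy sketch, not a real web server: `BlockingServer` and `simulate` are hypothetical names, the thread cap is modelled with a semaphore, and the numbers are scaled down to 10 threads and 11 users so the demo runs quickly.

```python
import threading
import time


class BlockingServer:
    """Toy thread-per-request server with a hard cap on threads."""

    def __init__(self, max_threads: int, wait_seconds: float) -> None:
        self._free_threads = threading.Semaphore(max_threads)
        self._wait_seconds = wait_seconds

    def handle(self) -> int:
        """Return an HTTP-style status code for one request."""
        if not self._free_threads.acquire(blocking=False):
            return 503  # every thread is already parked on a slow call
        try:
            time.sleep(self._wait_seconds)  # thread is held but idle
            return 200
        finally:
            self._free_threads.release()


def simulate(users: int, max_threads: int, wait_seconds: float = 0.2) -> list[int]:
    """Have all users hit the server at the same moment; collect statuses."""
    server = BlockingServer(max_threads, wait_seconds)
    statuses = [0] * users
    start_together = threading.Barrier(users)

    def user(index: int) -> None:
        start_together.wait()  # everyone "clicks" simultaneously
        statuses[index] = server.handle()

    clients = [threading.Thread(target=user, args=(i,)) for i in range(users)]
    for client in clients:
        client.start()
    for client in clients:
        client.join()
    return statuses


if __name__ == "__main__":
    result = simulate(users=11, max_threads=10)
    print("served:", result.count(200), "rejected:", result.count(503))
```

Every accepted request holds a thread for the full wait, so the one user beyond the cap is turned away even though the server is doing almost no actual work.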

Asynchronous to the Rescue

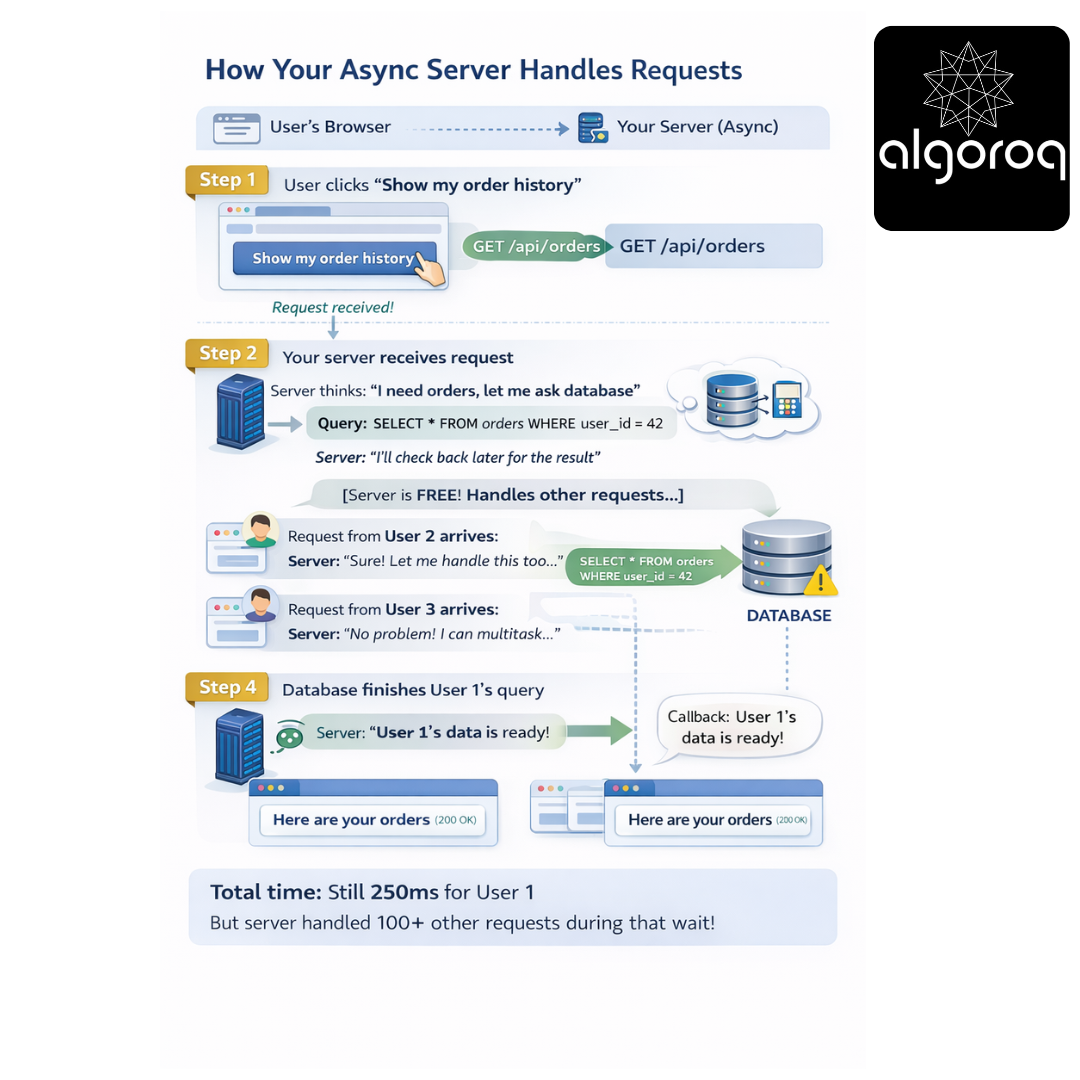

Asynchronous Example: Modern Web Request

User's Browser → Your Server (Async)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Step 1: User clicks "Show my order history"

Browser: GET /api/orders

Step 2: Your server receives request

Server thinks: "I need orders, let me ask database"

Step 3: Server → Database (non-blocking)

Query: SELECT * FROM orders WHERE user_id = 42

Server: "I'll check back later for the result"

[Server is FREE! Handles other requests...]

Step 4: Database finishes User 1's query

Callback: "Hey Server, User 1's data is ready!"

Step 5: Server sends response to User 1

Server → Browser: 200 OK, here are your orders

Total time: Still 250ms for User 1

But server handled 100+ other requests during that wait!
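The five steps above can be sketched with Python's `asyncio`. This assumes a non-blocking database driver, which `asyncio.sleep` stands in for here; `query_orders`, `handle_get_orders`, `serve`, and the 200ms `DB_LATENCY` are illustrative names and values, not part of any real framework.

```python
import asyncio
import time

DB_LATENCY = 0.2  # assumed query time, in seconds


async def query_orders(user_id: int) -> list[str]:
    """Stand-in for a non-blocking database call."""
    await asyncio.sleep(DB_LATENCY)  # yields to the event loop while waiting
    return [f"order-{user_id}-1", f"order-{user_id}-2"]


async def handle_get_orders(user_id: int) -> dict:
    """Handler for GET /api/orders (steps 2 to 5)."""
    # The handler is suspended here, but the thread is free to run others.
    orders = await query_orders(user_id)
    return {"status": 200, "user_id": user_id, "orders": orders}


async def serve(user_ids: list[int]) -> tuple[list[dict], float]:
    """Handle all requests concurrently on one thread; return responses and elapsed time."""
    start = time.perf_counter()
    responses = await asyncio.gather(*(handle_get_orders(u) for u in user_ids))
    return list(responses), time.perf_counter() - start


if __name__ == "__main__":
    responses, elapsed = asyncio.run(serve(list(range(100))))
    print(f"{len(responses)} responses in {elapsed:.2f}s on a single thread")
```

Each individual request still takes about as long as the database needs, but the waits overlap, so a hundred requests finish in roughly the time of one rather than a hundred times that.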

Visual Comparison:

Synchronous (Phone Calls):

━━━━━━━━━━━━━━━━━━━━━━━━━━

Timeline:

0s Call Friend 1 ─────► Wait ─────► Response (2s)

2s Call Friend 2 ─────► Wait ─────► Response (4s)

4s Call Friend 3 ─────► Wait ─────► Response (6s)

Total: 6 seconds, sequential

Asynchronous (Text Messages):

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Timeline:

0s Text Friend 1 ► (go do other things)

0s Text Friend 2 ► (go do other things)

0s Text Friend 3 ► (go do other things)

[You're free to do anything!]

2s Friend 1 responds ◄

4s Friend 2 responds ◄

6s Friend 3 responds ◄

Total: 0 seconds of YOUR blocked time!
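The two timelines can be reproduced with a small script. It is only a sketch: `phone_calls`, `text_messages`, and `UNIT` are invented names, and each timeline "second" is shrunk to 20ms so the demo finishes quickly. Both functions report how long the caller was unable to do anything else, measured in timeline seconds.

```python
import asyncio
import time

UNIT = 0.02  # one timeline "second" shrunk to 20 ms


def phone_calls(friends: list[str], call_length: float) -> float:
    """Synchronous: each call blocks the caller until it ends."""
    start = time.perf_counter()
    for _ in friends:
        time.sleep(call_length * UNIT)  # caller can do nothing else meanwhile
    return (time.perf_counter() - start) / UNIT


async def friend_replies(name: str, after: float, inbox: list[str]) -> None:
    """A friend answers the text after some delay."""
    await asyncio.sleep(after * UNIT)
    inbox.append(name)


async def text_messages(reply_after: dict[str, float]) -> tuple[float, list[str]]:
    """Asynchronous: send every text, then carry on."""
    inbox: list[str] = []
    start = time.perf_counter()
    pending = [
        asyncio.create_task(friend_replies(name, delay, inbox))
        for name, delay in reply_after.items()
    ]
    blocked = (time.perf_counter() - start) / UNIT  # only the sending counts
    # A real program would do other work here; the demo just collects replies.
    await asyncio.gather(*pending)
    return blocked, inbox


if __name__ == "__main__":
    friends = ["Friend 1", "Friend 2", "Friend 3"]
    print("sync blocked:", round(phone_calls(friends, 2), 1))
    blocked, inbox = asyncio.run(text_messages(dict(zip(friends, [2, 4, 6]))))
    print("async blocked:", round(blocked, 1), "replies in order:", inbox)
```

Note that the last reply still arrives at the 6-second mark in the asynchronous version. What changes is not when the answers come in, but how much of that time the sender spends stuck waiting.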

Real-World System Examples

Here is where you have probably met each pattern:

Synchronous Communication Examples:

- Traditional REST API Calls

━━━━━━━━━━━━━━━━━━━━━━━━━━━━

Your code WAITS for the response before continuing.

- Database Queries (traditional)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

- Remote Procedure Calls (RPC)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

// Order Service:

queue.send("process_order", orderData)

response.send("Order received!") // ← Immediate!

// [Order Service moves on to other work]

// Payment Service (later):

message = queue.receive()

processPayment(message)

queue.send("order_processed", result)
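A runnable version of the queue pseudocode above might look like the following. It is a sketch under simplifying assumptions: Python's in-process `queue.Queue` stands in for a real message broker, a background thread plays the Payment Service, and `process_payment`, `order_service`, and `payment_service` are hypothetical names.

```python
import queue
import threading


def process_payment(order: dict) -> dict:
    """Hypothetical payment step; always succeeds in this sketch."""
    return {"order_id": order["order_id"], "status": "paid"}


def payment_service(orders: queue.Queue, results: queue.Queue) -> None:
    """Consumer: picks up orders whenever they arrive."""
    while True:
        order = orders.get()  # blocks this worker only, not the producer
        if order is None:  # sentinel value: shut down
            break
        results.put(process_payment(order))


def order_service(orders: queue.Queue, order: dict) -> str:
    """Producer: enqueue the order and acknowledge immediately."""
    orders.put(order)
    return "Order received!"


def demo() -> tuple[str, dict]:
    orders: queue.Queue = queue.Queue()
    results: queue.Queue = queue.Queue()
    worker = threading.Thread(target=payment_service, args=(orders, results))
    worker.start()
    ack = order_service(orders, {"order_id": 1, "amount": 25})
    outcome = results.get(timeout=5)  # the "order_processed" message, later
    orders.put(None)  # tell the worker to stop
    worker.join()
    return ack, outcome


if __name__ == "__main__":
    print(demo())
```

The Order Service gets its acknowledgment as soon as the message is enqueued; the payment result travels back on a separate queue whenever the consumer gets to it.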